Many enterprises already possess significant AI capability.

Across the enterprise, the larger barrier to scalable AI value is disconnected execution.

Work still moves through disconnected systems, fragmented workflows, siloed teams, duplicated processes, and operational handoffs that slow decisions long before AI enters the equation. Knowledge sits inside tools, threads, recordings, pages, tickets, and dashboards that rarely function as one operational system.

Atlassian Team 2026 put that problem at the center of the strategic conversation.

The strongest signal from the event centered on connected operational context, workflow-native AI, and execution visibility grounded in real enterprise work.

That is why Teamwork Graph deserves executive attention. Operationally, it functions as an enterprise context layer connecting work, knowledge, teams, goals, services, dependencies, decisions, and delivery history so people and AI can operate with clearer visibility across the business.

Nearly every major theme at Team ’26 pointed back to the same idea: AI becomes more useful when enterprise work becomes more connected.

Teamwork Graph revealed the next competitive layer in enterprise AI

Most enterprise AI conversations still focus on models, copilots, agents, prompts, and automation. Those capabilities matter, but Atlassian Team 2026 emphasized a different layer: the relationships between work.

Why context quality matters

The event repeatedly reinforced a broader operational reality. AI becomes substantially more useful when it can operate within connected, high-quality context.

AI tools can generate responses, summarize activity, recommend next steps, and automate repetitive tasks. But in enterprise environments, the usefulness of those outputs depends on the quality of the surrounding context. Consider an AI assistant that cannot see how a Jira ticket connects to a Confluence decision, how that decision connects to a product goal, how that goal connects to a service dependency, or how that dependency affects delivery risk. That assistant will remain limited: it may still save time, but it will struggle to support execution at scale.
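The chain of relationships described above can be pictured as a small graph. The following is a minimal sketch in Python, assuming a hypothetical in-memory model: the node names, relationship labels, and the `trace_context` helper are all illustrative and do not represent Atlassian's Teamwork Graph data model or API.

```python
from collections import deque

# Hypothetical edges: (source, relationship, target).
# Every identifier here is invented for illustration.
EDGES = [
    ("jira:PAY-101", "implements", "confluence:decision-retry-policy"),
    ("confluence:decision-retry-policy", "supports", "goal:reduce-failed-payments"),
    ("goal:reduce-failed-payments", "depends_on", "service:payments-gateway"),
    ("service:payments-gateway", "affects", "risk:q3-release-delay"),
    ("jira:PAY-102", "blocks", "jira:PAY-101"),
]


def build_graph(edges):
    """Turn an edge list into an adjacency map of node -> [(relationship, target)]."""
    graph = {}
    for source, relationship, target in edges:
        graph.setdefault(source, []).append((relationship, target))
    return graph


def trace_context(graph, start, goal):
    """Breadth-first search for the shortest chain of hops from start to goal.

    Returns a list of (relationship, node) hops, or None if no chain exists.
    """
    queue = deque([(start, [])])
    visited = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for relationship, neighbor in graph.get(node, []):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append((neighbor, path + [(relationship, neighbor)]))
    return None


graph = build_graph(EDGES)
chain = trace_context(graph, "jira:PAY-101", "risk:q3-release-delay")
for relationship, node in chain:
    print(f"--{relationship}--> {node}")
```

In this sketch the ticket reaches the delivery risk in four hops. If any one edge is removed, `trace_context` returns `None`, which is the situation an assistant faces when the relationships between work are never recorded.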

Teamwork Graph points to a broader answer. Operationally, it attempts to connect projects, tickets, documentation, conversations, goals, services, decisions, people, dependencies, and work history into a usable enterprise context layer. That context layer matters because work rarely breaks down inside one tool. It breaks down between teams, systems, decisions, and handoffs.

AI systems struggle when work is disconnected, context is incomplete, and operational relationships remain invisible. Teamwork Graph represents Atlassian’s attempt to address that fragmentation problem at the level where enterprise work actually happens.

From tools to operational systems

This direction also reflects a broader platform shift. Jira, Confluence, Loom, Rovo, Atlas, Focus, and Service Collection are increasingly positioned as connected operational systems rather than isolated tools.

Each product still serves a clear function, but the larger value emerges when work, knowledge, communication, planning, service delivery, and AI assistance reinforce each other.

Conversations and demonstrations surrounding Team ’26 reinforced the same pattern. Teamwork Graph repeatedly surfaced as more than an abstract platform concept. Customers responded strongly to practical workflow demonstrations because they could see connected work functioning in real time. The strongest moments were often the moments when attendees connected the demonstration back to familiar visibility gaps inside their own organizations.

The same pattern appeared around the System of Work Accelerator. When customers saw outputs connected to workflow maturity, collaboration patterns, and operational visibility, the discussion moved quickly from product interest to organizational diagnosis. The question became less about what Atlassian can do in theory and more about where disconnected execution is already limiting the enterprise today.

One of the clearest shifts emerging from Team ’26 is that the market conversation is moving beyond the rise of AI agents alone. Enterprise AI value increasingly depends on connected operational context.

Why disconnected execution is limiting enterprise AI value

Many enterprises already struggle with:

- disconnected workflows

- duplicated effort

- inconsistent documentation

- fragmented service operations

- siloed delivery teams

- weak execution visibility

These problems create friction before AI enters the workflow.

AI does not automatically remove that friction. In many cases, it exposes and amplifies it.

Connected workflows give AI better operational context. Fragmented workflows force AI to work around gaps, incomplete relationships, and inconsistent knowledge. That affects trust, usefulness, and how well adoption scales.

The core challenge now centers on whether the operating environment gives AI enough context to support meaningful work.

Why Rovo reflects the broader shift

The shift toward connected execution also explains why Rovo generated so much attention throughout Team ’26.

Rovo is often discussed in relation to enterprise search, agents, summarization, and workflow support. But its strategic relevance is broader than chatbot-style interaction. Rovo becomes more important when it functions as a context-aware workflow layer that helps people find knowledge, understand activity, coordinate execution, and move through work with less friction.

The highest-value use cases are not limited to individual productivity. They emerge when AI supports the way teams coordinate, plan, deliver, resolve issues, and make decisions across shared systems of work.

The customer conversations at Team ’26 reinforced this point. Attendees asked practical questions about Rovo usage, adoption, governance, and workflow fit. Interest centered less on AI novelty and more on operational applicability. Demonstrations resonated when they showed AI functioning inside real workflows rather than sitting beside them as another disconnected tool.

The “make AI real in 90 days” message appears to have resonated for the same reason. It translated AI from an abstract ambition into a near-term operational challenge. Leaders want to know where to start, what workflows to prioritize, what governance must be in place, and how to connect AI to work people already do.

The organizations that realize the most value from enterprise AI will likely be the organizations that reduce operational fragmentation first. That makes workflow visibility and connected execution increasingly strategic.

Atlassian is shifting from work management toward execution visibility

Atlassian began as a platform for organizing and tracking work. Team ’26 revealed how far that positioning has evolved.

That shift changes the executive conversation. The company is increasingly focused on connecting strategic goals, project delivery, service operations, documentation, communication, AI assistance, and workflow coordination into a more visible operational system.

The cost of coordination overhead

Atlassian’s move toward execution visibility reflects a broader enterprise problem: organizations lose significant execution capacity to coordination overhead. Teams spend time searching for information, reconstructing decisions, reconciling reports, and managing dependencies across fragmented systems. Leaders often lack consistent visibility into how work connects across the business.

Atlassian’s broader System of Work narrative speaks directly to that challenge. The emphasis on connected teamwork, shared visibility, and alignment between strategy and execution reflects where enterprise platform value is moving.

The same pattern appeared in booth and theater conversations. The System of Work Accelerator generated strong engagement because it gave teams a concrete way to examine the maturity of their Atlassian environment. Customers related quickly to the workflow gaps it identified because those gaps reflected known operating challenges: unclear ownership, fragmented visibility, disconnected workstreams, inconsistent collaboration patterns, and difficulty translating platform usage into business value.

Theater conversations around operationalizing connected execution also pointed to a broader market need. Leaders are trying to understand how AI fits into real workflows without creating more complexity. They want AI to reduce coordination friction, improve visibility, and support better decisions. They do not want another layer of disconnected experimentation.

The strongest response at Team ’26 often came from conversations that translated AI into workflow visibility, coordination improvement, and connected execution. This appears to reflect where the market conversation is heading next.

The next evolution of enterprise platforms will likely center on making enterprise execution more visible, connected, and context-aware.

Why cloud modernization is becoming a connected execution decision

Cloud modernization has often been framed as an infrastructure decision. For many Atlassian customers, that framing is becoming too narrow.

At Team ’26, cloud conversations increasingly centered on:

- operational interoperability

- governance continuity

- AI scalability

- ecosystem readiness

- execution visibility

Those concerns extend well beyond hosting.

For organizations still operating in legacy or highly customized environments, operational fragmentation often persists through outdated integrations, inconsistent workflows, local workarounds, and limited visibility across systems. As AI-enabled workflows become more important, those limitations become harder to ignore.

Why AI increases modernization pressure

Rovo and broader AI adoption may accelerate cloud decision-making because AI value depends on connected, governed, and current operational context. If work remains fragmented across outdated systems, AI adoption becomes more difficult to scale and govern effectively.

This is especially important in regulated industries, where leaders must evaluate accountability, transparency, permissions, data access, human oversight, and operational continuity alongside technical readiness.

The cloud discussion increasingly centers on operational connectivity, AI-enabled workflows, scalable execution visibility, enterprise interoperability, and future operational capability.

For many enterprises, cloud modernization increasingly reflects a decision about how connected and operationally visible the organization can become.

What enterprise leaders should focus on next

Enterprise leaders should avoid treating AI adoption as a standalone technology initiative. The more strategic move is to improve the operational systems surrounding execution itself.

Enterprise leaders should focus on:

- visibility across execution

- coordination friction

- workflow governance

- operational connectivity

Atlassian Team 2026 made that priority clearer. AI-native execution requires workflows, knowledge, teams, services, goals, and governance to operate with enough connection for AI to support human judgment inside real work.

1. Identify visibility gaps across execution

Leaders should begin by assessing where work loses visibility across the organization.

Disconnected workflows, siloed operational data, fragmented knowledge systems, duplicated work, and dependency blind spots directly affect AI usefulness, workflow efficiency, operational trust, and adoption scalability.

The priority is to identify where the organization lacks shared context. Which teams cannot see related work? Which decisions are difficult to trace? Which reports require manual reconciliation before leaders can trust them?
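The questions above can be turned into a simple audit. The following is a minimal sketch in Python, assuming work items have already been exported into a hypothetical `WorkItem` record. The field names and the `find_visibility_gaps` helper are assumptions made for illustration, not part of any Atlassian product or API.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class WorkItem:
    """Hypothetical export of a work item; all fields are illustrative."""
    key: str
    team: str
    linked_goal: Optional[str] = None
    linked_decisions: List[str] = field(default_factory=list)


def find_visibility_gaps(items: List[WorkItem]) -> Dict[str, List[str]]:
    """Map each work item key to the kinds of context it is missing."""
    gaps = {}
    for item in items:
        missing = []
        if item.linked_goal is None:
            missing.append("goal")
        if not item.linked_decisions:
            missing.append("decision")
        if missing:
            gaps[item.key] = missing
    return gaps


items = [
    WorkItem("PAY-101", "payments", "reduce-failed-payments", ["retry-policy"]),
    WorkItem("OPS-7", "platform"),
    WorkItem("WEB-3", "web", linked_goal="improve-checkout"),
]

gaps = find_visibility_gaps(items)
for key, missing in gaps.items():
    print(f"{key}: no linked {' or '.join(missing)}")
```

A report like this does not fix fragmentation, but it gives leaders a starting list of the work that neither people nor AI can currently trace back to a goal or a decision.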

AI will inherit the quality of the environment around it. If operational visibility remains inconsistent, AI-supported execution will remain inconsistent as well.

2. Reduce coordination friction inside high-impact workflows

Enterprise leaders should prioritize workflows where coordination friction slows meaningful work.

These are often workflows where cross-functional dependencies create delays, visibility is inconsistent, operational handoffs slow execution, or coordination overhead remains high. Product delivery, service operations, onboarding, portfolio planning, and enterprise change initiatives are common examples.

The objective is to connect work in ways that reduce unnecessary effort and improve decision flow. Leaders should look beyond tool adoption alone and focus on whether teams and AI systems can clearly understand the relationships between goals, decisions, dependencies, risks, and outcomes.

When those relationships become clearer, AI can support execution with greater reliability and context awareness.

3. Build governance into connected workflows early

AI governance cannot remain separate from the workflows where AI will be used. It must be built into the way work moves.

This requires organizations to define accountability, workflow transparency, operational governance, adoption enablement, human oversight, and sustainable operating practices early.

Leaders need clear guidance on where AI can support work, where human judgment remains required, and how AI-enabled workflows will be reviewed and measured over time.

When governance is embedded into connected workflows, adoption becomes more scalable, trustworthy, and sustainable.

The enterprises that realize the greatest value from AI-native execution will likely be the organizations that build the clearest operational visibility and strongest workflow connectivity first.

The clearest signal from Atlassian Team 2026

Atlassian Team 2026 reinforced a broader shift toward connected execution and AI systems grounded in real operational work.

The next competitive advantage in enterprise AI may come from building operational environments where work, knowledge, decisions, goals, and workflows are connected clearly enough for AI to participate meaningfully inside execution.

That was the clearest strategic signal emerging from Atlassian Team ’26.

See where disconnected work is limiting execution visibility

The System of Work Accelerator helps organizations uncover workflow fragmentation, identify operational visibility gaps, evaluate collaboration maturity, and prepare Atlassian Cloud environments for AI-native workflows.

Use the free assessment to understand how connected your Atlassian workflows really are and where better visibility could improve execution.

Frequently asked questions about Atlassian Team 2026

What happened at Atlassian Team 2026?

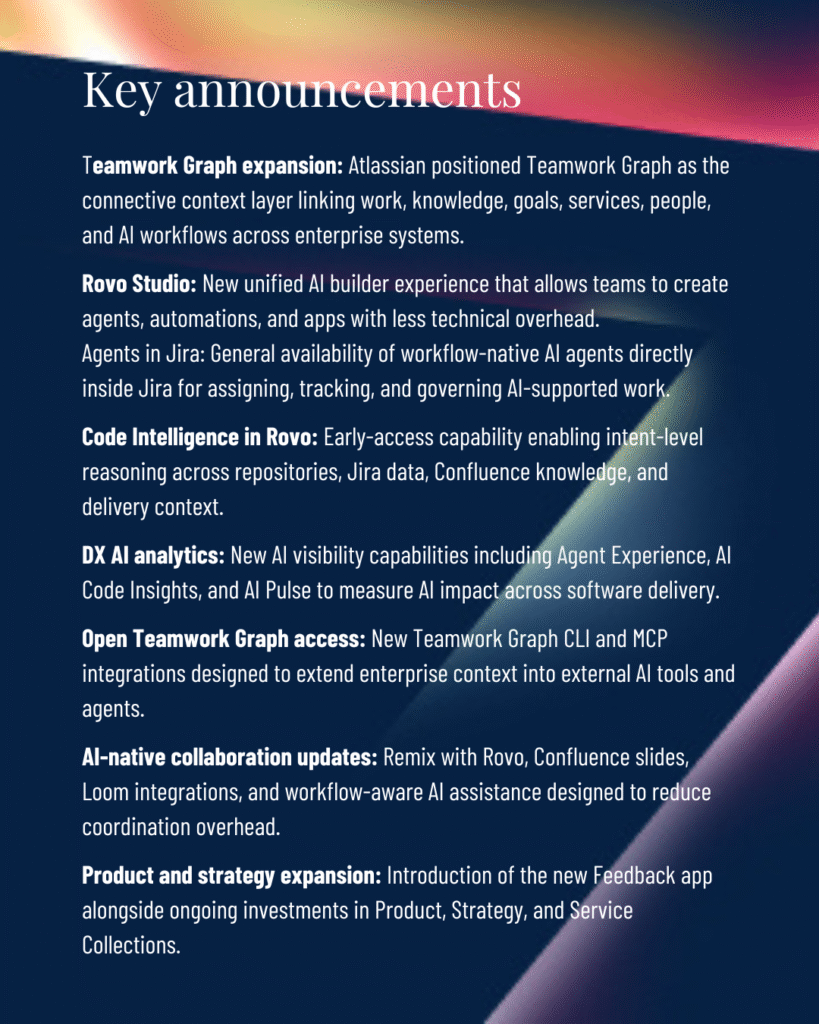

Atlassian Team 2026 focused heavily on AI-native execution, connected workflows, and operational visibility. Major announcements highlighted Teamwork Graph, Atlassian Rovo, workflow-native AI agents, and new approaches for connecting enterprise knowledge, services, goals, and delivery workflows into a shared operational context.

What is Atlassian Teamwork Graph?

Teamwork Graph is Atlassian’s connected enterprise context layer that links work, people, knowledge, goals, services, and operational history across systems. It helps AI and human teams operate with better context, visibility, and relationship awareness across enterprise workflows.

Why is Teamwork Graph important for enterprise AI?

Enterprise AI systems perform better when they can access connected operational context. Teamwork Graph helps AI tools understand relationships between projects, documentation, goals, dependencies, and workflows, which improves coordination, search, summarization, governance, and execution support.

What is Atlassian Rovo?

Atlassian Rovo is an AI-powered enterprise search and workflow assistance platform designed to help teams find knowledge, summarize activity, coordinate work, and support execution across Atlassian products and connected enterprise systems.

How does Atlassian Rovo support enterprise workflows?

Rovo supports enterprise workflows by helping teams retrieve operational knowledge, surface relevant context, summarize activity, identify relationships between work items, and coordinate execution more efficiently across connected systems and teams.

Why are connected workflows important for AI adoption?

Connected workflows improve AI usefulness by giving AI systems access to more complete operational context. Fragmented systems, inconsistent documentation, and disconnected workflows reduce trust, limit visibility, and make enterprise AI harder to scale effectively.

How is Atlassian changing from work management to execution visibility?

Atlassian is increasingly positioning its platform around connected execution, shared operational visibility, workflow coordination, and alignment between strategy and delivery. The focus is shifting toward helping enterprises understand how work connects across teams, systems, services, and goals.

Why does cloud modernization matter for AI-native execution?

Cloud modernization increasingly affects operational connectivity, interoperability, governance, and AI readiness. Organizations operating in fragmented or heavily customized environments may struggle to scale AI-enabled workflows because disconnected systems limit visibility, context quality, and governance continuity.