PLEASE NOTE: Atlassian Rovo is evolving rapidly as Atlassian expands AI capabilities across Jira, Confluence, and connected enterprise tools. Features, integrations, availability, and workflows may vary based on your Atlassian products, subscription tier, admin configuration, and current Atlassian releases.

Getting started with Atlassian Rovo

What is Atlassian Rovo?

Atlassian Rovo is Atlassian’s AI solution for enterprise search, chat, agents, and workflow assistance. It helps teams find knowledge across Atlassian and connected third-party tools, ask questions in natural language, summarize information, and use AI agents to support work inside tools like Jira and Confluence.

How do I access Atlassian Rovo in my Atlassian Cloud instance?

Users can access Atlassian Rovo through supported Atlassian Cloud products when Rovo is available and activated for their site. Once it is enabled, users can open Rovo Chat via the chat icon in the bottom-right corner, search from the top navigation bar, or use Rovo Studio. Access depends on your organization’s Atlassian plan, admin settings, product availability, and permissions.

Is Atlassian Rovo included in my Jira or Confluence subscription plan?

Yes. Rovo is included in every paid Atlassian Cloud plan, including Jira, Confluence, and Jira Service Management. The range of features you get, however, depends on your Atlassian Cloud plan, product configuration, and Atlassian’s current packaging. Organizations should verify eligibility through Atlassian Administration or current Atlassian documentation.

What is the difference between Rovo AI Search, Rovo Chat, and Rovo Agents?

Rovo AI Search helps users find information across Atlassian and connected third-party tools. Rovo Chat lets users ask natural-language questions, summarize information, and get contextual assistance. Rovo Agents are configurable AI teammates designed to help users complete specific tasks, support workflows, and take action based on defined instructions and knowledge sources.

What is the difference between Atlassian Intelligence and Atlassian Rovo?

Atlassian Intelligence and Atlassian Rovo are closely related, but they are not the same thing. Atlassian Intelligence is the underlying AI capability embedded within Jira, Confluence, and other Atlassian products, while Rovo is Atlassian’s broader AI solution for enterprise search, chat, agents, and cross-platform workflow assistance across both Atlassian and connected third-party tools.

Rovo AI Search and knowledge discovery

How does Rovo AI Search work?

Rovo AI Search uses natural-language search to help users find information across Atlassian products and connected third-party apps. It draws from indexed sources, permissions, relevance signals, and work context to surface results that are more useful than keyword matching alone.

How is Atlassian Rovo different from Jira or Confluence search?

Jira and Confluence search focus primarily on content within those individual products and generally locate exact text matches with limited search operators. Atlassian Rovo uses natural language and semantic understanding to provide a broader AI-powered search experience that connects knowledge across Jira, Confluence, and approved third-party tools, helping users find information across a larger work context.

Why can’t I find all Jira issues or Confluence pages in Rovo AI Search?

Rovo AI Search only surfaces information the user is allowed to access and that has been indexed or connected properly. Missing results may be caused by permissions, connector configuration, indexing delays, product availability, deleted content, or content that is outside the connected knowledge sources.
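
The causes listed above can be read as a simple troubleshooting checklist. The sketch below is only an illustration of that checklist in Python: the function name, the dictionary keys, and the reason strings are all hypothetical and do not correspond to any Rovo or Atlassian API.

```python
def missing_result_causes(item: dict) -> list:
    """Return likely reasons a (hypothetical) item is absent from search.

    Each check is (key, value that signals a problem, human-readable reason).
    Keys that are absent from `item` are treated as unknown and not flagged.
    """
    checks = [
        ("deleted", True, "content has been deleted"),
        ("user_can_view", False, "user lacks permission to view it"),
        ("source_connected", False, "source is not connected or the connector is misconfigured"),
        ("indexed", False, "content has not been indexed yet"),
    ]
    return [reason for key, bad_value, reason in checks if item.get(key) == bad_value]


# Illustrative input: a page the user can view, from a connected source,
# that has not been indexed yet.
page = {"deleted": False, "user_can_view": True, "source_connected": True, "indexed": False}
causes = missing_result_causes(page)
```

In practice an admin would gather these facts from Atlassian Administration and the connector settings; the code only shows the order in which it can help to rule causes out.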

How does Atlassian Rovo decide what content appears in search results?

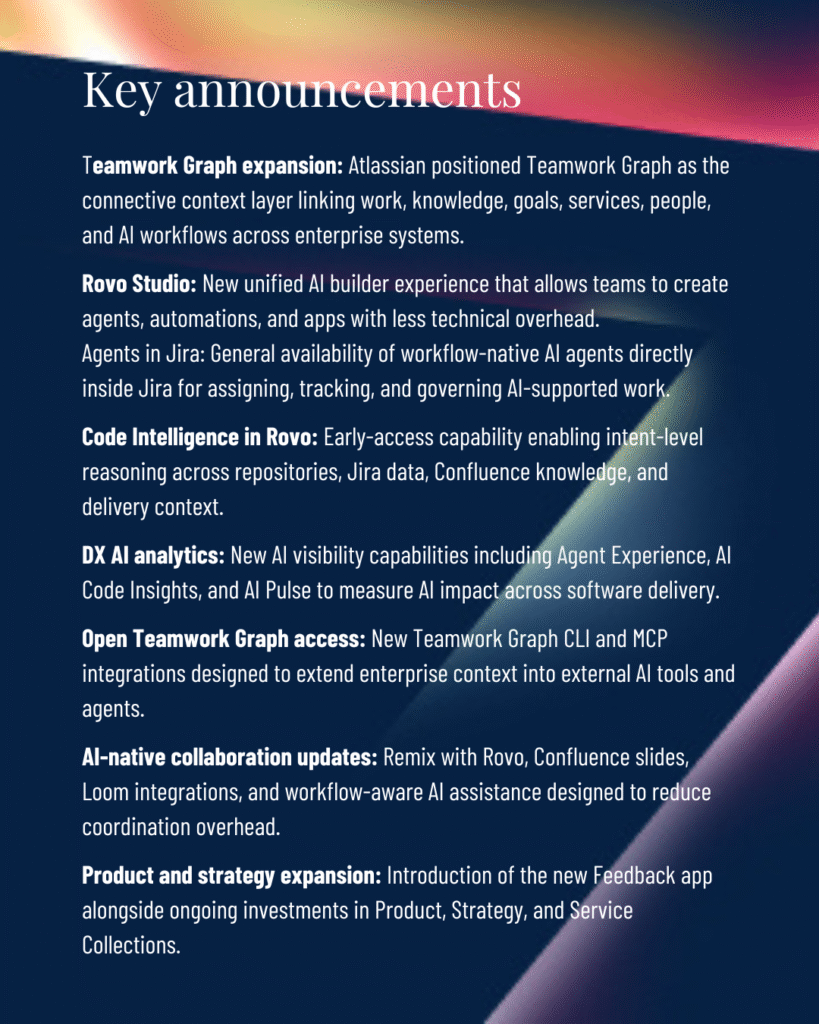

Atlassian Rovo uses factors such as search intent, content relevance, user permissions, available connectors, and indexed knowledge sources to determine which results appear. It uses the Teamwork Graph, Atlassian’s proprietary data layer, to surface the most relevant information based on context. Users should only see content they are authorized to access under existing Atlassian and connected-app permissions.
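
To make the idea of permission-aware ranking concrete, here is a minimal Python sketch. It is not how Rovo or the Teamwork Graph is implemented; the `Document` class, the group names, and the word-overlap score are assumptions chosen only to show the principle that permissions are checked before relevance is considered.

```python
from dataclasses import dataclass, field


@dataclass
class Document:
    """Hypothetical indexed item; not a real Rovo data structure."""
    title: str
    text: str
    allowed_groups: set = field(default_factory=set)


def search(query: str, docs: list, user_groups: set) -> list:
    """Filter by permission first, then rank by a toy relevance score."""
    terms = set(query.lower().split())
    results = []
    for doc in docs:
        # Permission check: skip anything the user cannot already open.
        if not (doc.allowed_groups & user_groups):
            continue
        # Toy relevance: number of query terms found in the title or text.
        words = set((doc.title + " " + doc.text).lower().split())
        score = len(terms & words)
        if score > 0:
            results.append((score, doc.title))
    # Highest score first; ties broken alphabetically for stable output.
    results.sort(key=lambda pair: (-pair[0], pair[1]))
    return [title for _, title in results]


docs = [
    Document("Release checklist", "steps for the mobile release", {"engineering"}),
    Document("Salary bands", "release of compensation data", {"hr"}),
    Document("Mobile roadmap", "mobile release plan for Q3", {"engineering", "product"}),
]

visible = search("mobile release", docs, user_groups={"engineering"})
```

Here the "Salary bands" document matches the query term "release" but never reaches the ranking step, because the user is not in a group allowed to see it.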

How often does Atlassian Rovo refresh or index content?

Atlassian Rovo continuously indexes content across Atlassian tools like Jira and Confluence. For connected third-party platforms such as Google Drive, SharePoint, and Slack, Rovo uses admin-configured connectors to regularly sync and update searchable content.

Can Atlassian Rovo connect to Slack, Google Drive, SharePoint, or other external tools?

Yes. Atlassian Rovo can connect to approved third-party tools through Rovo connectors and the Atlassian Teamwork Graph. Supported connections include tools such as Google Drive, SharePoint, Slack, GitHub, Microsoft Teams, and other enterprise apps. If a connector is available from Atlassian but not in your instance, check with your Atlassian Cloud admin.

Rovo security, permissions, and governance

Does Atlassian Rovo respect Jira and Confluence permissions?

Yes. Atlassian Rovo is designed to respect existing permissions in Jira, Confluence, and connected tools. Users should only see content they already have permission to access, which makes permissions hygiene an important part of any Rovo rollout.

Is company data used to train Atlassian Rovo or Atlassian Intelligence models?

Atlassian states that customer data sent to AI models through Rovo is used to generate a response, not to train third-party AI models. Organizations should still review Atlassian’s current data handling, privacy, and residency documentation to confirm how their data is processed in their specific environment.

What security and compliance considerations should organizations understand before using Atlassian Rovo?

Organizations should review permissions, connected data sources, admin controls, AI usage policies, data residency requirements, and compliance obligations before deploying Rovo broadly. Rovo respects existing permissions, and Atlassian states that customer data is not used to train LLMs. The Atlassian Cloud Platform complies with SOC 2 and ISO 27001 but is not HIPAA compliant at this time.

How can enterprises govern AI usage in Atlassian Rovo?

Enterprises can govern Atlassian Rovo through admin controls, permissions management, and AI governance settings within Atlassian Administration and Rovo Studio. Organizations can control access to AI features, manage who can create Rovo Agents, and align Rovo usage with existing security and compliance policies.

Rovo Chat, AI responses, and reliability

What is Rovo Chat and how does it work?

Rovo Chat is an AI assistant built into Atlassian tools. It works much like popular GenAI chatbots such as ChatGPT and Claude, so the learning curve should be short. Rovo Chat uses available work context from Atlassian and connected third-party apps. Users can ask questions, summarize information, draft content, find relevant work, and get help based on content they are permitted to access.

How accurate are Atlassian Rovo responses?

Rovo responses depend on the quality, freshness, and completeness of the information it can access. While useful for fast discovery and drafting, Rovo can struggle with complex counting tasks and deterministic, multi-step workflows. Like other AI systems, Rovo should be used with human review, especially for business-critical, compliance-sensitive, or customer-facing decisions.

Why does Atlassian Rovo sometimes generate incomplete or outdated information?

Rovo may generate incomplete or outdated answers when source content is incomplete, stale, poorly structured, not yet indexed, or unavailable because of permissions or connector limitations. Better knowledge hygiene, current documentation, and well-managed permissions can improve answer quality. Like other natural-language AI systems, Rovo is also subject to occasional issues such as hallucinations.

Can Atlassian Rovo execute actions in Jira or only provide answers?

Rovo can support actions and workflow assistance in Jira through supported agents, automations, and configured workflows. Depending on your organization’s security and governance requirements, Rovo Agents can either operate autonomously or require human approval before executing sensitive or irreversible actions.
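
The choice between autonomous operation and human approval can be pictured as a simple gate in front of each action. The Python sketch below is a generic illustration of that pattern, not Rovo's configuration model: `AgentAction`, the `sensitive` flag, and the status strings are hypothetical names introduced for this example.

```python
from dataclasses import dataclass


@dataclass
class AgentAction:
    """Hypothetical action proposed by an agent; names are illustrative only."""
    name: str
    sensitive: bool  # True for irreversible or high-impact actions


def run_action(action: AgentAction, approved_by_human: bool = False) -> str:
    """Apply a simple policy: sensitive actions wait for human approval."""
    if action.sensitive and not approved_by_human:
        return "pending_approval"
    return "executed"


add_comment = AgentAction("add_comment", sensitive=False)
delete_issue = AgentAction("delete_issue", sensitive=True)

statuses = [
    run_action(add_comment),                           # low risk, runs autonomously
    run_action(delete_issue),                          # held until a person approves
    run_action(delete_issue, approved_by_human=True),  # runs after approval
]
```

Which actions count as sensitive is a governance decision for each organization; the flag here simply stands in for that policy.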

How does Jira Intelligence work with Atlassian Rovo?

Rovo extends the AI-powered capabilities inside Jira (Jira Intelligence) beyond immediate issue-level tasks, helping users search across systems, summarize information, automate routine tasks, and connect work across Jira, Confluence, and other integrated tools.

How does Confluence Intelligence work with Atlassian Rovo?

Atlassian Rovo powers many of the AI capabilities within Confluence, including intelligent search, content generation, and workflow assistance. By connecting knowledge across Confluence, Jira, and integrated third-party tools, Rovo helps teams surface relevant information and act on it more efficiently.

Rovo Agents and automation

What are Atlassian Rovo Agents?

Rovo Agents are configurable AI teammates that help teams reduce repetitive work, automate tasks, and move work forward more efficiently. They can assist with activities like summarizing information, creating or updating Jira issues and Confluence pages, supporting workflows, and surfacing insights across Atlassian and connected third-party tools.

How do I create a Rovo Agent?

Users with the right permissions can create a Rovo Agent by opening Rovo Studio from the app switcher, selecting Agents, and choosing Create Agent. From there, define the agent’s purpose, configure its instructions and knowledge sources, and add skills to support specific workflows and tasks.

What are the best real-world use cases for Rovo Agents?

Common Rovo Agent use cases include summarizing Jira issues, generating Confluence updates, assisting with support triage, preparing release notes, analyzing customer feedback, creating test cases, answering internal knowledge questions, and helping teams reduce repetitive coordination work.

Can Rovo Agents take actions in Jira or only provide recommendations?

Rovo Agents can support actions in Jira when configured through supported workflows, skills, plugins, or Atlassian Automation. Examples include organizing backlogs, creating epics, updating trackers, and logging work. The specific actions available depend on agent configuration, permissions, product capabilities, and admin settings.

How does Rovo Automation work in Jira Service Management and Jira Software?

Rovo Automation connects AI capabilities to Jira Software and Jira Service Management workflows, helping teams reduce repetitive work and automate common tasks. Organizations can use Rovo actions and AI agents to summarize issues, support ticket workflows, generate updates, assist with software delivery, and build automation rules using natural-language prompts.

What are the current limitations of Atlassian Rovo and Rovo Agents?

Atlassian Rovo and Rovo Agents are designed to improve search, automation, and workflow efficiency across the Atlassian ecosystem, but they still require human oversight and well-structured data. Organizations may encounter limitations around complex cross-platform automations, large-scale data processing, advanced customization, and governance as AI usage expands across teams.

Atlassian Rovo adoption and enterprise use cases

What are the best Atlassian Rovo use cases for software development teams?

Software development teams can use Atlassian Rovo to accelerate planning, reduce repetitive engineering work, lessen costly context switching, and surface knowledge across tools like Jira, Confluence, Bitbucket, and VS Code. Common use cases include implementation planning, code generation assistance, automated pull request reviews, release note creation, backlog organization, and contextual search across connected systems.

How are organizations using Atlassian Rovo for IT support or incident management?

Organizations can use Atlassian Rovo to summarize incidents, support ticket triage, surface relevant knowledge articles, assist service agents, automate repetitive updates, and help teams connect support activity with related Jira, Confluence, and third-party information.

How can organizations improve adoption of Atlassian Rovo across teams?

Organizations can improve Atlassian Rovo adoption by integrating AI into existing workflows, establishing clear governance, and focusing on practical, high-value use cases. Successful rollouts typically combine executive support, hands-on Rovo onboarding training, clean and well-structured data, internal AI champions, and ongoing feedback to help teams use Rovo effectively in their daily work.

What does a successful Atlassian Rovo rollout look like?

A successful Atlassian Rovo rollout typically starts with targeted, high-value use cases before expanding across teams and workflows. The most effective deployments combine executive sponsorship, practical training, clear governance, measurable adoption goals, and ongoing feedback to help teams integrate Rovo naturally into their daily work. Working with an experienced Atlassian Partner can help make a Rovo rollout faster and more successful.