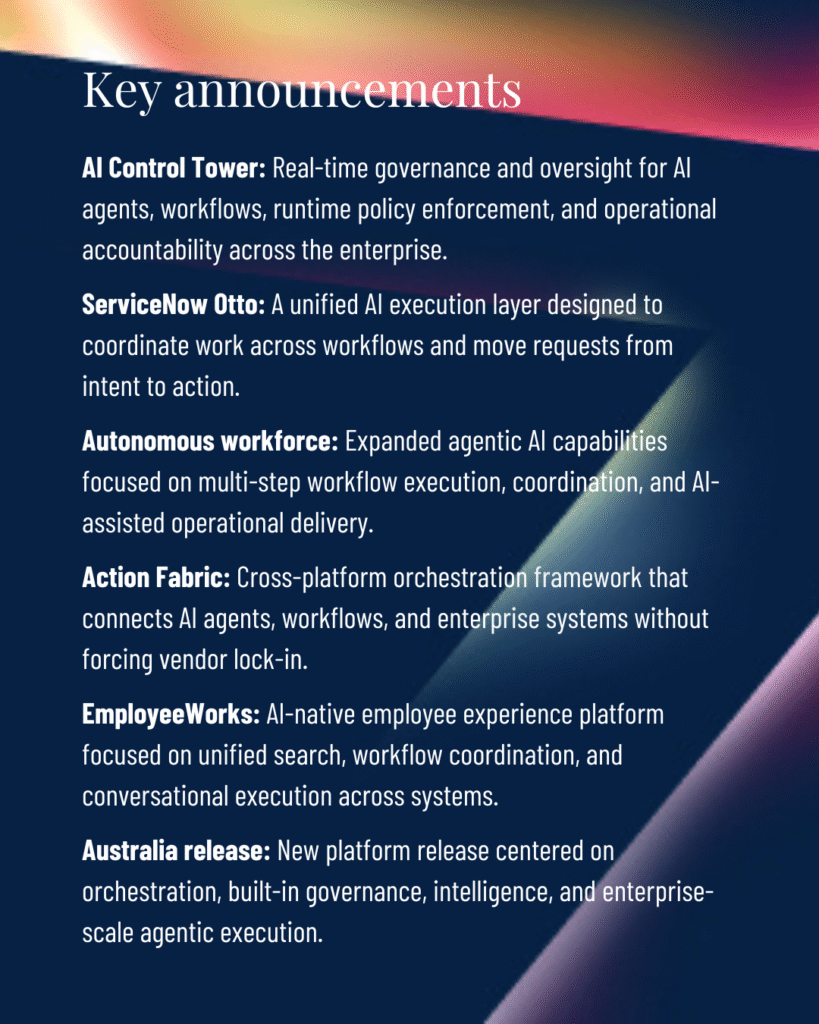

Most events like this center on new feature announcements, and there were some exciting ones. But ServiceNow Knowledge 2026 centered on more consequential topics: enterprise execution systems, orchestration, governance, and AI-enabled operational coordination across the business.

The most important conversations in Las Vegas focused on how AI moves through real work: how requests become action, how systems coordinate across platforms, how governance operates inside workflows, and how leaders scale AI without creating more fragmentation than value.

That marks a meaningful shift. Enterprise AI has moved beyond the stage where isolated copilots, productivity demos, and disconnected experiments can carry the strategy. Leaders now face a more complex question: how do they turn AI capability into governed execution at scale?

Many organizations already have multiple LLM investments, growing portfolios of AI pilots, and increasing pressure to prove value.

They also have legacy systems, fragmented knowledge environments, inconsistent employee experiences, and governance models that were designed for slower technology cycles. As agentic AI moves closer to business execution, those operating gaps become harder to ignore.

Knowledge 2026 brought that reality into focus. The event reflected a market shift toward execution architecture, orchestration, and governance as the foundation for enterprise-scale AI adoption.

For enterprise leaders, that shift carries a clear implication. The next phase of AI value will depend on how well organizations redesign workflows, decision paths, accountability structures, and adoption systems around AI-enabled execution.

Enterprise AI is moving from assistance to execution

The dominant signal from Knowledge 2026 was the movement from AI as an assistance layer to AI as part of the enterprise execution layer.

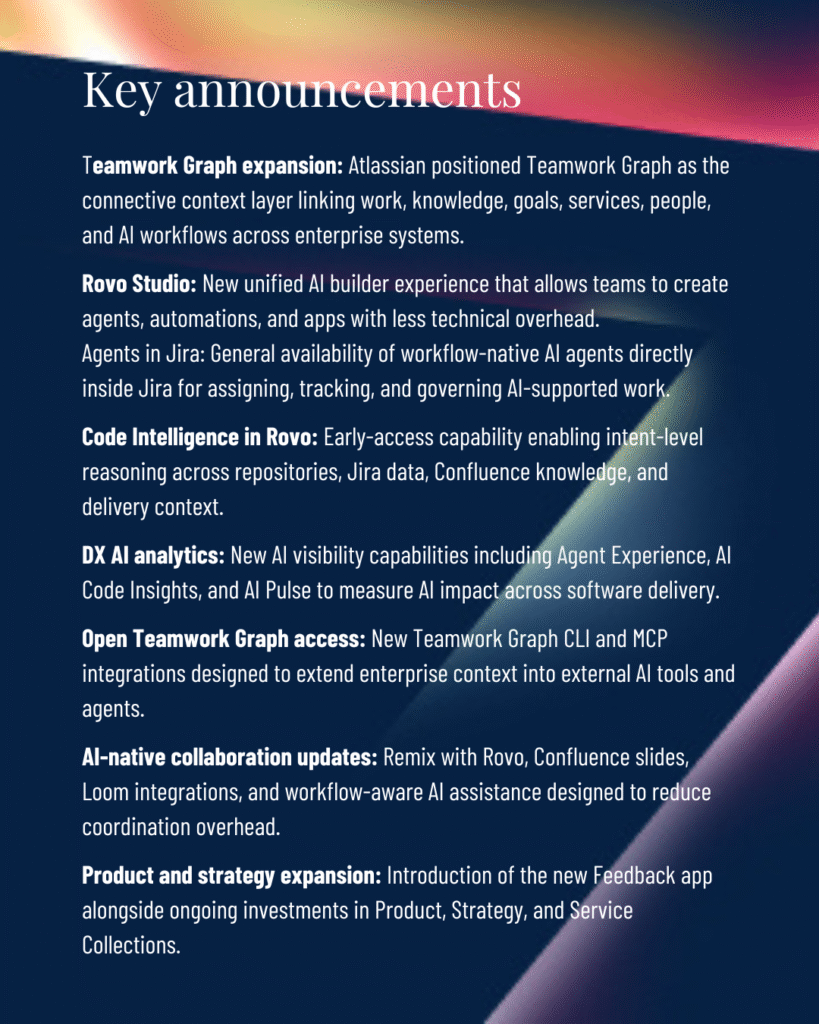

ServiceNow’s messaging around the “system of action,” autonomous workforce concepts, agentic business, Action Fabric, AI specialists, and workflow agents all pointed in the same direction. Taken together, these announcements reflected a larger market shift: AI is moving deeper into the systems where work happens.

That shift matters because the first wave of enterprise AI largely focused on helping individuals move faster.

Employees could summarize information, draft content, search knowledge, or complete isolated tasks with less manual effort. Those capabilities created useful gains, but they left the larger operating model mostly intact.

The next wave changes the pattern. AI now sits closer to workflows, approvals, service delivery, employee interactions, and cross-functional coordination. It can move work from request to resolution, guide decisions across systems, and connect intent to action in ways that reshape how organizations operate.

That shift creates a different standard for enterprise AI maturity. Success increasingly depends on whether AI capabilities function effectively inside the execution systems that determine speed, quality, accountability, and business outcomes.

That reality showed up clearly in customer conversations at Knowledge 2026. Leaders asked fewer exploratory questions about theoretical AI capability and focused more heavily on operational execution challenges.

Questions increasingly centered on:

- orchestration across systems

- interoperability across AI ecosystems

- responsible execution at scale

Those questions reveal where the market is going. Enterprise buyers increasingly understand that AI value comes from coordinated execution. They want to know how AI fits into the architecture of work and how it interacts with existing platforms.

The interface is becoming secondary to the execution layer

In this model, the interface matters less than what sits beneath it. Conversational experiences still matter, especially when they simplify access to information and action. But the more strategic question sits underneath the interface: what execution layer receives the request, interprets the intent, coordinates systems, applies policy, and moves the work forward?

That is where operating model transformation begins. Organizations seeing the most value are redesigning execution systems around AI-enabled workflows. They are clarifying where automation applies, where human judgment remains essential, how approvals change, how escalation works, and how adoption becomes part of the workflow rather than a separate change effort.

As enterprises scale AI-enabled execution, another challenge becomes more visible: fragmented AI ecosystems are creating operational complexity.

Orchestration is becoming the enterprise AI control layer

One of the most important post-Knowledge 2026 issues is the growing complexity of multi-LLM environments.

Many organizations now operate in a fragmented AI landscape. They may have investments in models from OpenAI, Anthropic (Claude), and Google (Gemini), embedded AI features inside major enterprise platforms, internally developed agents, and emerging use cases owned by different functions.

Each capability may create value in isolation. Together, they can create architecture uncertainty, integration fatigue, inconsistent user experiences, and governance gaps.

Enterprises are accumulating AI capabilities faster than they are building operational coordination around them.

That creates a strategic problem. Leaders want flexibility, continuity, and coordinated employee experiences across increasingly fragmented AI environments. They also want to preserve existing investments without rebuilding workflows every time the model market changes.

Why orchestration is becoming strategic infrastructure

ServiceNow’s orchestration direction speaks directly to this pressure. Action Fabric, workflow orchestration, execution coordination, and platform-of-platforms architecture all point toward a model where ServiceNow helps coordinate action across systems rather than forcing every piece of work into one isolated environment.

That idea resonated because many organizations have learned that modernization cannot depend on endless migration projects. Large enterprises already have valuable content, data, workflows, and knowledge stored across environments such as SharePoint, Confluence, ServiceNow, HR systems, IT systems, and other business platforms. Moving everything into one place can create disruption, cost, and resistance.

A more practical model is emerging:

- preserve existing systems that still create value

- orchestrate workflows across environments

- leave knowledge where it already lives

- reduce unnecessary migration friction

- create more unified employee experiences

In this model, organizations can modernize execution without forcing large-scale reconstruction projects that disrupt users, workflows, and operational continuity.

This is a critical implementation insight. AI operating model maturity will increasingly depend on orchestration layers that support flexible model integration, federated knowledge architectures, and long-term operational adaptability.

Organizations that coordinate orchestration strategically can reduce integration risk, preserve architectural flexibility, and create more durable foundations for AI-enabled execution. Organizations that deploy AI initiatives independently across functions often create rising complexity, duplicative effort, uneven adoption, and weaker governance.

The next enterprise AI advantage may come less from the models organizations buy and more from how effectively they orchestrate execution around them. A strong orchestration layer stabilizes how work gets done across changing AI ecosystems, allowing organizations to integrate multiple models, connect existing platforms, apply governance consistently, and preserve operational continuity as underlying technologies evolve.
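To make the "flexible at the model layer, stable at the orchestration layer" idea concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not ServiceNow's Action Fabric or any vendor SDK: the `Orchestrator` class, the `provider_a` and `provider_b` functions, and the `"summarize"` task type are all hypothetical names invented for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

# Assumption: a "model" is any callable that turns a prompt into text.
ModelFn = Callable[[str], str]


@dataclass
class Orchestrator:
    """Stable entry point for workflows; models can be swapped underneath it."""

    models: Dict[str, ModelFn] = field(default_factory=dict)
    routes: Dict[str, str] = field(default_factory=dict)  # task type -> model name

    def register(self, name: str, fn: ModelFn) -> None:
        # Make a model available to the orchestration layer.
        self.models[name] = fn

    def route(self, task_type: str, model_name: str) -> None:
        # Point a task type at a registered model; reject unknown names.
        if model_name not in self.models:
            raise KeyError(f"unknown model: {model_name}")
        self.routes[task_type] = model_name

    def run(self, task_type: str, prompt: str) -> str:
        # Callers name the task, never the model behind it.
        return self.models[self.routes[task_type]](prompt)


# Hypothetical stand-ins for real providers (no vendor API is called here).
def provider_a(prompt: str) -> str:
    return f"[A] {prompt}"


def provider_b(prompt: str) -> str:
    return f"[B] {prompt}"


orchestrator = Orchestrator()
orchestrator.register("provider_a", provider_a)
orchestrator.register("provider_b", provider_b)

orchestrator.route("summarize", "provider_a")
first = orchestrator.run("summarize", "ticket 123")

# Swap the model behind the task; the calling workflow does not change.
orchestrator.route("summarize", "provider_b")
second = orchestrator.run("summarize", "ticket 123")
```

The design choice worth noting is that workflows call `run("summarize", ...)` and never name a provider, so a change in the model market becomes a routing change rather than a workflow rebuild.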

That orchestration challenge naturally raises a second-order issue. As AI systems begin acting inside workflows, governance must move closer to execution.

Governance is becoming operational infrastructure

Governance emerged as one of the defining enterprise AI themes at Knowledge 2026 because agentic AI changes the risk profile of AI adoption.

When AI primarily generated content or surfaced insights, governance could focus heavily on acceptable use, data handling, model access, and review processes. Those controls remain important. But AI-enabled execution introduces a broader challenge: how do organizations govern systems that can trigger actions, route work, recommend decisions, escalate issues, and coordinate across business processes?

That question moves governance from policy documentation into operational infrastructure.

Governance now operates inside workflows

ServiceNow’s emphasis on Control Tower, runtime governance, AI oversight, policy enforcement, auditability, operational controls, and governed autonomy reflects this shift.

Enterprise AI governance now needs to operate directly in the flow of work.

That includes:

- defining when humans intervene

- clarifying escalation paths

- logging decisions and actions

- enforcing operational policy

- maintaining accountability for outcomes

That requires organizations to extend governance across compliance, risk, legal, data, workflow design, platform architecture, role definition, service delivery, and adoption planning so operational controls function inside the execution system itself.

The reason is simple: autonomous and semi-autonomous systems can create operational risk when accountability remains unclear. A workflow agent may accelerate work, but leaders still need to know what decisions it can make, what evidence it uses, when it stops, when it escalates, and who owns the business result.

Conversational interfaces may improve employee access and workflow speed, but enterprises still need controls around sensitive data, role-based access, approved actions, and escalation paths.
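The controls described above (approved actions, sensitive-data handling, escalation to a human, and logging of decisions) can be sketched as a small runtime check that sits in front of an agent's proposed action. This is a simplified illustration, not ServiceNow's AI Control Tower: `ProposedAction`, `GovernanceGate`, the decision labels, and the action names are hypothetical and chosen only for this example.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class ProposedAction:
    agent: str
    action: str
    touches_sensitive_data: bool = False


@dataclass
class GovernanceGate:
    """Evaluates each proposed action and records the decision for audit."""

    approved_actions: Set[str]
    audit_log: List[Dict[str, str]] = field(default_factory=list)

    def evaluate(self, proposal: ProposedAction) -> str:
        if proposal.action not in self.approved_actions:
            decision = "blocked"  # policy enforcement: action is not approved
        elif proposal.touches_sensitive_data:
            decision = "escalate_to_human"  # a person must review first
        else:
            decision = "allowed"
        # Every decision is logged, including the ones that are blocked.
        self.audit_log.append(
            {"agent": proposal.agent, "action": proposal.action, "decision": decision}
        )
        return decision


gate = GovernanceGate(approved_actions={"close_ticket", "reset_password"})

routine = gate.evaluate(ProposedAction("it_agent", "close_ticket"))
sensitive = gate.evaluate(
    ProposedAction("it_agent", "reset_password", touches_sensitive_data=True)
)
unapproved = gate.evaluate(ProposedAction("it_agent", "delete_account"))
```

In this sketch, the routine action is allowed, the sensitive one is escalated to a human, and the unapproved one is blocked, while all three land in `audit_log`. A real deployment would also need role-based access checks and a named owner for each outcome, which this example leaves out.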

Governance and orchestration therefore become inseparable. Orchestration determines how work moves. Governance determines how that movement remains safe, accountable, transparent, and aligned to enterprise policy.

Human accountability remains essential

Human judgment remains central to this model. AI can support decision flow, reduce manual effort, surface context, and coordinate action, but organizations still need people to define priorities, resolve ambiguity, manage exceptions, and own business accountability. Effective governance clarifies that relationship rather than treating automation as a substitute for responsibility.

This also has direct implications for adoption. Employees need to understand where AI fits, when to trust it, when to intervene, and how their roles change as workflows become more AI-enabled. Leaders need enablement systems that help people use AI with confidence while maintaining the judgment and accountability their work requires.

AI is changing how enterprises think about operating capacity

Knowledge 2026 also reflected a more direct conversation about operating capacity. Executives are increasingly evaluating AI through the lens of scalability, productivity economics, service demand, and workforce leverage. In many functions, the question is becoming more concrete: how can the organization handle more work, faster response expectations, and greater complexity without expanding headcount at the same rate?

That shift requires careful leadership. AI-enabled execution can reduce repetitive work, improve service speed, and help teams focus human effort where judgment matters most. It can also reshape job design, staffing assumptions, governance expectations, and workforce adaptation priorities as enterprises redesign workflows around AI-supported execution.

The market is competing on execution systems

This is why ServiceNow’s Knowledge 2026 direction matters. The announcements collectively pointed toward execution coordination, governed AI systems, workflow integration, and enterprise-scale orchestration. The strategic message was larger than any one product feature: the enterprise AI market is moving from isolated AI experiences toward coordinated systems of action.

That shift changes how leaders should think about competitive advantage. Enterprise value will increasingly depend on the operating systems that turn AI capability into coordinated execution. Organizations that build governance into execution systems can move faster with more control, scale AI use cases with clearer accountability, and adapt operating models without destabilizing the business.

This is the emerging AI operating model: flexible at the model layer, stable at the orchestration layer, governed at runtime, and grounded in human accountability.

Enterprises increasingly need implementation partners who understand orchestration strategy, workflow integration, governance architecture, operating model design, and adoption support as interconnected parts of the same transformation.

That shift creates practical priorities for leaders now.

What enterprise leaders should prepare for now

Knowledge 2026 gave enterprise leaders a clear view of what comes next. The organizations that move effectively will prepare their architecture, governance, workflows, and workforce for AI-enabled execution rather than treating agentic AI as another application rollout.

CIOs and technology leaders: build for orchestration early

Technology leaders should assume multi-LLM environments will become the norm. A durable AI strategy needs room for multiple models, embedded AI capabilities, changing vendor relationships, and evolving enterprise platforms.

That means orchestration strategy should begin early.

Technology leaders should prioritize:

- interoperable workflow infrastructure

- governance embedded into execution systems

- scalable integration patterns

- architecture that supports operational adaptability

Leaders also need to identify where AI-enabled work will cross systems, where existing architecture creates friction, and where fragmented AI deployments could create inconsistent experiences and disconnected governance. The goal is to establish architecture patterns that can scale across business functions as AI ecosystems continue evolving.

Operations and delivery leaders: redesign workflows around human-AI collaboration

Operations and delivery leaders should focus on how AI changes the movement of work.

Agentic AI creates value when it reduces decision friction, accelerates resolution, improves service consistency, and helps teams act with better context. That requires workflow redesign. Leaders need to examine where work stalls, where handoffs break down, where approvals create delay, and where employees lack the information needed to act confidently.

Modern execution systems should clarify the relationship between human and AI work. AI can route, summarize, recommend, retrieve, trigger, and coordinate. People still guide priorities, resolve exceptions, apply judgment, and own outcomes. That division of responsibility must be designed intentionally rather than left to informal adoption.

Operationalizing governance also becomes part of workflow modernization. Controls, escalation paths, audit trails, and approval logic should live inside the execution flow so teams can move faster without creating unmanaged risk.

Transformation and workforce leaders: prepare people for AI-enabled execution

Transformation and workforce leaders have a central role in this next phase because AI-enabled execution changes behavior, roles, decision patterns, and trust.

Adoption requires practical enablement that helps people understand how AI fits into their work, what decisions remain human-led, and how accountability evolves as workflows become more automated. Leaders should prepare operating models for continuous adaptation through updated role definitions, governance participation, feedback loops, and measurement systems tied to business outcomes.

Across all leadership roles, the direction is consistent: AI value now depends on implementation discipline. Organizations need orchestration expertise, governance frameworks, workflow redesign, and operating model support to operationalize these changes successfully.

Knowledge 2026 pointed to a new operational phase of enterprise AI

Knowledge 2026 revealed an enterprise AI market moving toward operational systems built around orchestration, workflow integration, governance, and execution architecture.

That shift changes the competitive landscape. Enterprises will increasingly differentiate through their ability to coordinate AI-enabled execution across systems, govern workflows responsibly, and adapt operating models without disrupting the business.

For many organizations, that will require practical guidance across orchestration strategy, governance design, workflow modernization, and enterprise adoption. The next competitive advantage may come from how effectively enterprises orchestrate execution around AI.

Prepare your enterprise AI operating model for what comes next

ServiceNow Knowledge 2026 made one thing clear: enterprise AI value now depends on more than deploying new capabilities. Leaders need orchestration strategies, governance models, workflow integration, and operating models that support AI-enabled execution at scale.

The AI Strategy and Transformation Workshop helps enterprise leaders assess readiness, identify high-value opportunities, and define a practical path for responsible AI transformation across real workflows.

Frequently asked questions about ServiceNow Knowledge 2026

What happened at ServiceNow Knowledge 2026?

ServiceNow Knowledge 2026 focused heavily on agentic AI, orchestration, governance, and AI-enabled execution systems. The event highlighted how enterprises are moving beyond isolated AI tools toward coordinated workflows, runtime governance, and operational models designed to support AI at enterprise scale.

What is agentic AI in ServiceNow?

Agentic AI refers to AI systems that can coordinate actions, complete multi-step workflows, retrieve information, and support execution across enterprise systems. At Knowledge 2026, ServiceNow positioned agentic AI as part of a broader operational framework focused on orchestration, governance, and workflow integration.

Why is orchestration becoming important in enterprise AI?

Enterprises increasingly operate across multiple AI models, platforms, workflows, and data environments. Orchestration helps coordinate those systems so organizations can maintain operational continuity, reduce fragmentation, apply governance consistently, and support AI-enabled execution without rebuilding workflows around every technology change.

What is ServiceNow Action Fabric?

ServiceNow Action Fabric is an orchestration framework designed to connect AI agents, workflows, and enterprise systems across different platforms. It supports coordinated execution and interoperability without requiring organizations to migrate every workflow or knowledge source into a single environment.

Why are enterprises concerned about multi-LLM environments?

Many organizations already use multiple AI models, such as OpenAI’s GPT models, Anthropic’s Claude, and Google’s Gemini, alongside embedded AI capabilities inside enterprise platforms. That creates concerns around interoperability, governance, architecture complexity, employee experience consistency, and long-term operational adaptability.

What is AI Control Tower in ServiceNow?

AI Control Tower is ServiceNow’s governance and oversight framework for enterprise AI operations. It focuses on runtime governance, policy enforcement, operational visibility, auditability, and accountability across AI-enabled workflows, agents, and execution systems.

How is AI changing enterprise operating models?

AI is changing how enterprises design workflows, coordinate decisions, manage governance, and scale execution across the organization. Many leaders are redesigning operating models around AI-enabled workflows, orchestration layers, and governance structures that support responsible automation and human accountability.

What should enterprise leaders prioritize after Knowledge 2026?

Enterprise leaders should prioritize orchestration strategy, governance integration, interoperable workflow infrastructure, and operating model readiness. Organizations that prepare early for AI-enabled execution can adapt more effectively as enterprise AI ecosystems continue evolving.